If you are curious about large language models.

Getting Started with LLMs

Some people are curious about LLMs but unsure how to get started. Here are some pointers to learn a little bit about them while having a lot of fun in the process!

Understanding LLMs

Two good references to begin understanding how they work internally:

- Best place to start, with a lot of great references: https://jaykmody.com/blog/gpt-from-scratch/

- Also interesting, with a different perspective that touches on a few things the other article does not: https://writings.stephenwolfram.com/2023/02/what-is-chatgpt-doing-and-why-does-it-work/

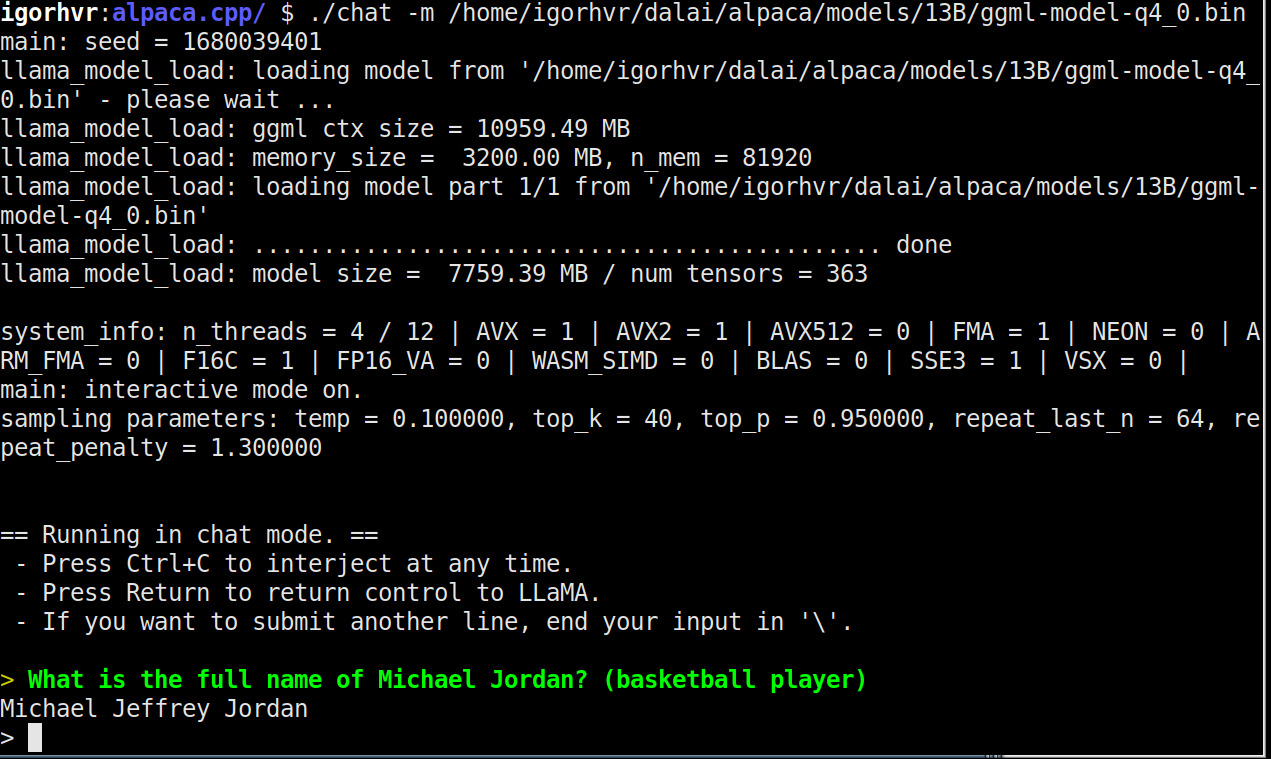

Running LLMs locally (on your laptop!)

You can run a very interesting model locally: it was created by Facebook (https://ai.facebook.com/blog/large-language-model-llama-meta-ai/) and fine-tuned by a Stanford group (https://github.com/tatsu-lab/stanford_alpaca). Amazingly, it requires nothing more than ~10GB of RAM and a decent CPU. Soon enough we will have such things running on our phones…
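To get a feel for where the ~10GB figure comes from, here is a rough back-of-the-envelope sketch. It assumes the 13B model has about 13 billion weights and that the 4-bit quantized file averages roughly 5 bits per weight (4 bits for the value plus a per-block scale). Both numbers are my assumptions, not something stated by the projects above, and the function name is made up for this example.

```python
# Back-of-the-envelope size of a 4-bit quantized model.
# Assumption: ~5 bits per weight on average (4-bit values plus a small
# per-block scale). The real file layout may differ.


def quantized_size_gb(n_params: float, bits_per_weight: float = 5.0) -> float:
    """Approximate size of the quantized weights in GB (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    # Assumption: "13B" means roughly 13 billion parameters.
    size = quantized_size_gb(13e9)
    print(f"13B at ~5 bits/weight: about {size:.1f} GB of weights")
```

Under those assumptions the weights alone take most of the budget, and the rest of the ~10GB presumably goes to working buffers and the program itself.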

- Install a recent version of node (18 or above).

- Run `npx dalai alpaca install 13B`. This will take a while and will download the model (a multi-GB file).

- Test this with the command `npx dalai serve`, which will open a simple web interface under http://localhost:3000/

- Clone the following repository: https://github.com/antimatter15/alpaca.cpp

- Enter it and use `make chat` to compile.

- To run it in a chat interface, use a command similar to `./chat -m /home/igorhvr/dalai/alpaca/models/13B/ggml-model-q4_0.bin`. You will need to adjust the path to the place where the prior command `npx dalai alpaca install 13B` put your model.

- Voilà!
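If you are not sure where the model ended up, a small script can look for it and print the matching chat command. This is only a sketch: it assumes dalai stored its files somewhere under ~/dalai (as in my path above) and that the file is called ggml-model-q4_0.bin. The helper names `find_models` and `chat_command` are made up for this example.

```python
from pathlib import Path
from typing import List

# File name taken from the chat command above; adjust if yours differs.
MODEL_NAME = "ggml-model-q4_0.bin"


def find_models(root: Path, name: str = MODEL_NAME) -> List[Path]:
    """Return every file called `name` below `root`, sorted for stable output."""
    if not root.is_dir():
        return []
    return sorted(p for p in root.rglob(name) if p.is_file())


def chat_command(model: Path) -> List[str]:
    """Build the ./chat invocation for one model file."""
    return ["./chat", "-m", str(model)]


if __name__ == "__main__":
    # Assumption: dalai put its files under ~/dalai.
    models = find_models(Path.home() / "dalai")
    if not models:
        print("No model found; check where dalai put its files.")
    for model in models:
        print(" ".join(chat_command(model)))
```

Run the printed command from inside the alpaca.cpp directory, since that is where `make chat` leaves the `chat` binary.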

Hey! This is worse than ChatGPT (GPT-4) by OpenAI!

True! A couple of days ago someone launched an attempt to do better (I saw it yesterday: https://news.ycombinator.com/item?id=35349608). I haven't had time to play with it properly (it does run/work :-) but maybe you can?

What about GPT-J?

If you want to play with GPT-J (https://huggingface.co/EleutherAI/gpt-j-6B), a very interesting open alternative launched by https://www.eleuther.ai/, there is a really great guide:

https://devforth.io/blog/gpt-j-is-a-self-hosted-open-source-analog-of-gpt-3-how-to-run-in-docker/

As a bonus, it also teaches you how to use https://vast.ai/, an unbelievably cheap way to run models (or whatever you want, really :-) on other people's computers. If you have good hardware (and good video cards in particular) lying around, you can also rent it out there.